What is big data anyway? Data is constantly being generated all around us. Think of everything you do throughout the day and how you interact with digital systems. Perhaps you stop at a coffee shop every morning around 8:05 AM and order an espresso. Or maybe you usually go to the supermarket on Thursday evenings. Think of the purchases you make with a credit card, and how they could be categorized. All of these events generate data. Big data. Lots of data. Companies can use technologies like Apache Hadoop to make sense of all this unstructured data and, for example, provide personalized advertising to their customers.

Think of healthcare. Research organizations can collect data on patients' conditions and try to make sense of it to find common links. As genome sequencing becomes more affordable, big data can help researchers find new correlations between conditions and genomics, leading to more targeted therapies and more personalized medicine.

Big data is all around us, and it grows bigger every day. Organizations are now examining data they have been collecting for years but never quite knew what to do with. Apache Hadoop is one way to make sense of this unstructured data.

The Journey to the Sahara

Sahara is OpenStack’s answer to the big data question. It became an integrated project in the Juno release and started out under the name Savanna. Hortonworks, Mirantis, and Red Hat have all contributed significantly to the program. Because the project still went by Savanna around the Icehouse release, the name change may confuse some, and you will still see compatibility code that refers to the legacy name. Despite the seemingly low version number (0.3), there has been a lot of development in this area, as the 0.3 release notes show.

When we think about something like Hadoop and something like OpenStack, it is easy to see how well suited they are for each other. The ability to provision a Hadoop environment from a Heat template is a beautiful and powerful thing. Imagine buying hardware for Hadoop, big servers with lots of memory and processing power, and being able to re-purpose them for a different set of data over and over again. There may even be a case for running your Hadoop nodes with Ironic, OpenStack’s bare metal provisioning service. The possibilities become endless, and you keep using the toolset you already use to operate your cloud.

The Safari Begins

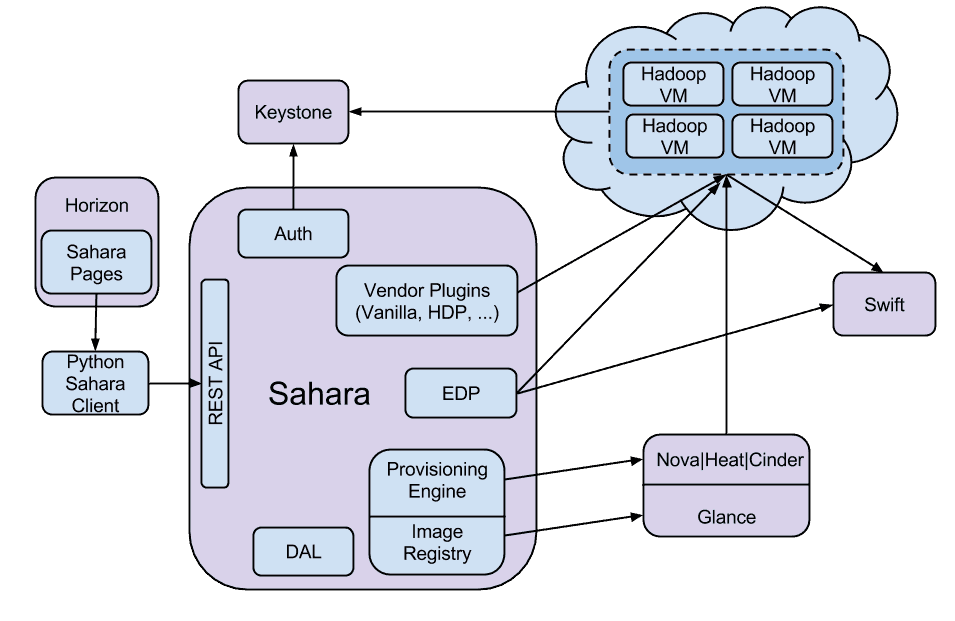

Let’s look at how Sahara deploys a Hadoop environment. Sahara, like all other OpenStack programs, is managed through its own dedicated REST API. It relies heavily on other OpenStack programs: Horizon for ordering your Hadoop environment, Keystone so OpenStack knows you’re allowed to have it, Glance to deploy the images you’ve already set up, Nova to run your nodes, and Swift to store the data Hadoop processes. Sahara is also responsible for creating and managing the Hadoop cluster. Sahara can use cluster templates, making it easy, for example, to deploy the same environment every month with a different data set.
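To make the REST API and cluster template workflow a little more concrete, here is a minimal sketch in Python using only the standard library. It assumes the Sahara v1.1 cluster-create call (`POST /v1.1/{project_id}/clusters`) on Sahara's default API port 8386; the endpoint, project ID, token, template, image, and keypair IDs, and the `vanilla` plugin version are hypothetical placeholders. The helper `build_cluster_request` is illustrative only, not part of any OpenStack client library, and it just builds the request, so nothing is sent until you hand it to `urllib.request.urlopen`.

```python
import json
import urllib.request

# Hypothetical endpoint: assumes Sahara's v1.1 API on its default port 8386.
SAHARA_ENDPOINT = "http://controller:8386/v1.1"


def build_cluster_request(endpoint, project_id, token, name,
                          cluster_template_id, image_id, keypair,
                          plugin_name="vanilla", hadoop_version="2.4.1"):
    """Build (but do not send) a POST asking Sahara to launch a cluster
    from an existing cluster template. All IDs are caller-supplied
    placeholders; plugin/version defaults are assumptions."""
    body = {
        "name": name,
        "plugin_name": plugin_name,
        "hadoop_version": hadoop_version,
        "cluster_template_id": cluster_template_id,  # reusable node layout
        "default_image_id": image_id,                # image registered in Glance
        "user_keypair_id": keypair,                  # Nova keypair for the nodes
    }
    return urllib.request.Request(
        f"{endpoint}/{project_id}/clusters",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "X-Auth-Token": token,                   # token issued by Keystone
        },
        method="POST",
    )


# Usage sketch: the same template relaunched under a new, month-specific name.
req = build_cluster_request(SAHARA_ENDPOINT, "demo-project", "TOKEN",
                            name="analytics-2014-12",
                            cluster_template_id="TEMPLATE_ID",
                            image_id="IMAGE_ID", keypair="mykey")
# urllib.request.urlopen(req) would submit this to a live Sahara API.
```

Because the cluster template carries the node layout, relaunching next month's environment only means calling the helper again with a new cluster name; pointing the Hadoop jobs at a different Swift container for the new data set is a separate step not shown here.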

Big Data is Bigger Than You Think

When we talk about big data, we do mean big. This unstructured data grows quickly, easily into terabytes, petabytes, and even exabytes. Organizations have stored transaction data for many reasons: regulatory requirements, the ability to do trend reporting, and, well, why not? The ability to make sense of this unstructured data also provides opportunities that organizations are just beginning to realize.

Many companies you are familiar with use Hadoop: eBay, Facebook, Google, Hulu, LinkedIn, Last.fm, and the list goes on. Apache encourages organizations to add themselves to the Hadoop PoweredBy page, and reading through it will give you an idea of how many types of organizations this technology spans.

Whether you’re already diving into big data today or planning to build out a big data platform in the future, OpenStack is positioning itself as the place where it lands. There is some great work happening in the Sahara program, and with the Kilo release in April 2015 we can expect to see a lot more development in this area.

Song of the Day – Imagine Dragons – I Bet My Life

Melissa is an Independent Technology Analyst & Content Creator, focused on IT infrastructure and information security. She is a VMware Certified Design Expert (VCDX-236) and has spent her career focused on the full IT infrastructure stack.
