When it comes to vSphere HA, there are a couple of key concepts: Admission Control and its failover policy settings, and Host Isolation Response. First of all, what exactly is vSphere HA anyway? High Availability is the mechanism that protects virtual machines against hardware failures by restarting them on a different host in the cluster if the host they are running on fails.

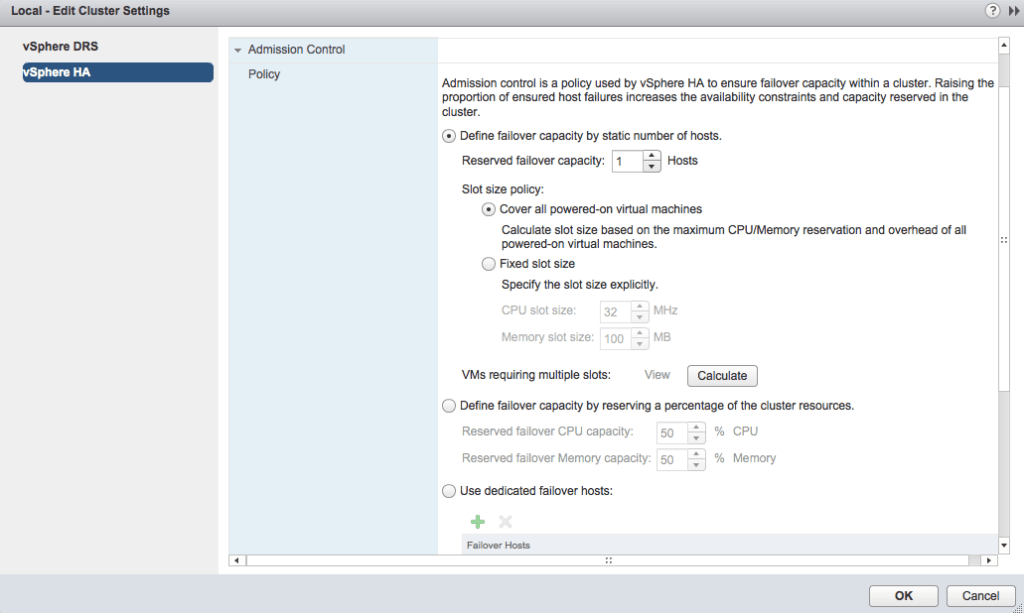

Admission Control for HA is the policy that vSphere uses to ensure that you have your desired amount of failover capacity in the environment. There are three methods to do this.

(No, we aren’t going to talk about this kind of slot…)

Define failover capacity by static number of hosts.

This is what I used to use in some cases, for example an 8-node cluster sized to survive either 1 or 2 host failures. Something important to consider here is the notion of a HA slot in vSphere. The slot size can be calculated automatically or configured manually. HA derives it from the CPU and memory reservations on your VMs: the largest reservations set the slot size, and the more restrictive of the two resources determines how many slots each host can hold. Think about this for a moment. If you’re running a cluster with a number of Monster VMs and some smaller ones, your HA slot size is going to be the size of your Monster VMs' reservations unless you manually change it. If you’re going to use this method, you really need a solid understanding of the reservations in your environment.
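To make the Monster VM effect concrete, here is a rough sketch of the slot arithmetic. It is a simplification under stated assumptions, not the exact algorithm HA runs: it ignores per-VM memory overhead, and the `VM` and `Host` classes, the example sizes, and the `min_cpu_mhz` and `min_mem_mb` floors are all hypothetical values chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class VM:
    name: str
    cpu_reservation_mhz: int
    mem_reservation_mb: int


@dataclass
class Host:
    name: str
    cpu_mhz: int
    mem_mb: int


def slot_size(vms, min_cpu_mhz=32, min_mem_mb=128):
    """Return (cpu_mhz, mem_mb) for one slot.

    The largest reservation of each resource wins. The floors are
    illustrative stand-ins for the defaults HA applies when a VM has
    no reservation; they are assumptions, not documented values.
    """
    cpu = max([vm.cpu_reservation_mhz for vm in vms] + [min_cpu_mhz])
    mem = max([vm.mem_reservation_mb for vm in vms] + [min_mem_mb])
    return cpu, mem


def slots_per_host(host, slot_cpu_mhz, slot_mem_mb):
    """The more restrictive resource decides how many slots fit."""
    return min(host.cpu_mhz // slot_cpu_mhz, host.mem_mb // slot_mem_mb)


host = Host("esx01", cpu_mhz=20000, mem_mb=131072)
small_vms = [VM(f"app{i}", 500, 1024) for i in range(10)]
monster = VM("monster-db", 8000, 65536)

# Only small VMs: slots are small, so many fit on a host.
cpu, mem = slot_size(small_vms)
slots_small_only = slots_per_host(host, cpu, mem)

# Add one Monster VM: every slot is now Monster-sized.
cpu, mem = slot_size(small_vms + [monster])
slots_with_monster = slots_per_host(host, cpu, mem)

print(slots_small_only, slots_with_monster)
```

In this made-up example, a single Monster VM reservation takes the host from 40 slots down to 2, which is why knowing your reservations matters so much with this policy.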

Eric Shanks has a great post on HA slot sizes if you are looking for more in-depth information, and Duncan Epping has everything you ever wanted to know about HA over at his blog and in his book.

Define failover capacity by reserving a percentage of the cluster resources.

I asked over Twitter what Admission Control methods people liked to use, and a number of people mentioned this one. Whenever I turned off Admission Control, I ended up using a more manual version of this method, by calculating the percentage of resources available on each host in the cluster. You can decide what amount of resources you want to leave free, and have that translate to how many host failures you want to survive in your cluster.
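As a back-of-the-envelope sketch of that translation, the snippet below works out what percentage of cluster capacity to keep free in order to survive a given number of host failures. The function name `reserve_percent` and the host sizes are hypothetical, and it takes the conservative assumption that the largest hosts are the ones that fail.

```python
def reserve_percent(host_capacities, failures_to_tolerate):
    """Percentage of total capacity to keep free.

    host_capacities: one number per host (for example CPU in MHz or
    memory in MB). Assumes the largest hosts are the ones lost.
    """
    if not 0 <= failures_to_tolerate < len(host_capacities):
        raise ValueError("failures_to_tolerate must be less than the host count")
    largest = sorted(host_capacities, reverse=True)[:failures_to_tolerate]
    return 100.0 * sum(largest) / sum(host_capacities)


# An 8-node cluster of identical hosts, as in the example earlier.
cluster = [20000] * 8
one_failure = reserve_percent(cluster, 1)
two_failures = reserve_percent(cluster, 2)
print(one_failure, two_failures)
```

For identical hosts this reduces to failures divided by host count, so an 8-node cluster needs 12.5% free to survive one failure and 25% to survive two. Run it once for CPU and once for memory if your hosts are not balanced.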

Use dedicated failover hosts.

This method does exactly what it sounds like: you specify which hosts you would like to use for failover. Keep in mind that if you use this, the failover hosts will not have any VMs running on them, so your working hosts will run at a higher resource utilization.

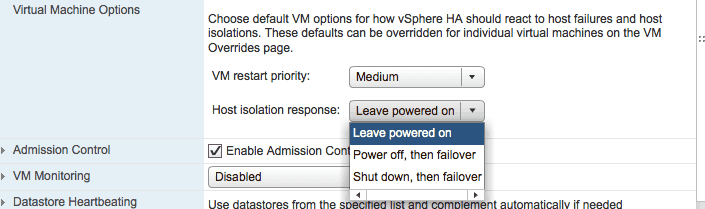

Now, that leaves us with Host Isolation Response. What do you want your host to do when it is isolated from the network? In previous versions of vSphere you were able to choose from Leave powered on, Power off, or Shut down. Now, while they do basically the same thing, your options are named slightly differently. Just something I noticed moving to vSphere 5.5.

“Leave powered on” leaves your VMs running if your host is isolated, “Power off, then failover” powers your VM down hard before HA restarts it, and “Shut down, then failover” shuts the VM down gracefully before HA restarts it. Which one to use, and when? Well, the answer, like just about everything else in vSphere and technology, is “it depends”. I had a particular cluster that for some reason had hosts that just loved becoming disconnected from vCenter. I chose Leave powered on because I didn’t particularly want a HA event every time a host went out to lunch. You can also set this option individually on VMs.

When you create a HA cluster, one of your hosts will become the master host of the cluster. The master host is responsible for monitoring the other hosts in the cluster, the VMs in the cluster, and reporting back to vCenter.

HA uses your management network for heartbeating. Management network redundancy is always a good idea, and it makes HA more robust as well. You can add another VMkernel port (vmk) to a vSwitch you are not using for management and enable it for management traffic, or you can ensure that you have multiple uplinks on your management vSwitch. Additionally, the master host will use datastores for heartbeating if it cannot communicate with a host over the management network. This is to ensure that the host really has failed before kicking off a HA event. By default, HA will use two datastores for heartbeating, and this can be increased up to 5 in the advanced settings for HA.
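The two heartbeat channels can be pictured as a small decision table. The sketch below is my simplified model of how the master tells a dead host from one that is merely cut off from the network. The `HostState` names and the `classify_host` function are hypothetical, and real HA performs additional checks that are left out here.

```python
from enum import Enum


class HostState(Enum):
    LIVE = "live"
    ISOLATED_OR_PARTITIONED = "isolated or partitioned"
    FAILED = "failed"


def classify_host(network_heartbeat: bool, datastore_heartbeat: bool) -> HostState:
    """Simplified view of what the master concludes about another host."""
    if network_heartbeat:
        # Management network heartbeats are arriving: nothing to do.
        return HostState.LIVE
    if datastore_heartbeat:
        # Silent on the network but still updating its heartbeat
        # datastores: the host is alive, just unreachable.
        return HostState.ISOLATED_OR_PARTITIONED
    # No sign of life on either channel: treat the host as failed.
    return HostState.FAILED


for net, ds in [(True, True), (False, True), (False, False)]:
    print(net, ds, classify_host(net, ds).value)
```

Only the last case means the host is considered failed and its VMs get restarted elsewhere. In the middle case, what happens to the VMs comes down to the Host Isolation Response you picked.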

That’s HA in a nutshell. I highly recommend reading the vSphere 5.5 Availability Guide to cover anything I did not. It is a really good document and should refresh everyone on their HA, as well as their DRS, which will be coming soon as a post of its own.

Melissa is an Independent Technology Analyst & Content Creator, focused on IT infrastructure and information security. She is a VMware Certified Design Expert (VCDX-236) and has spent her career focused on the full IT infrastructure stack.