When I talk to people who are new to the world of VMware virtualization, I have noticed VMware vMotion and DRS (Distributed Resource Scheduler) can be confusing topics. They are distinctly different technologies that do different things, and the terms vMotion and DRS cannot be used interchangeably.

That doesn’t mean they don’t work together! Under the covers, DRS utilizes vMotion.

When it comes to these two VMware features, there are also different types of each one! While both vMotion and DRS have been around for quite some time, they are constantly being enhanced and gaining new functionality.

Let’s take a look at what these two technologies are, and how they complement each other.

What is VMware vMotion and how does it work?

VMware vMotion is pure magic. It allows a virtual machine to change where it is running without any downtime. For example, I can move a virtual machine from one ESXi host to a different ESXi host and my users will not notice. vMotion is one of those things that has been constantly enhanced with new vCenter and ESXi releases over the years.

As a history lesson, VMware vMotion first debuted in Virtual Center 1.0. Be sure to check out the section on Migrating Virtual Machines in the Virtual Center 1.0 Documentation. You will also notice vMotion used to be stylized with a big v, as VMotion.

vMotion had some requirements that we are all still familiar with today, such as shared storage, shared networking, and a network to use for the transfer of the virtual machine state.

How Does vMotion Work?

vMotion works by transferring the virtual machine from one host to another without any disruption to the virtual machine or applications it is serving. When you read about vMotion, you will read about the state of the virtual machine.

The state of the virtual machine is the contents of memory (what the virtual machine is actively doing), and the information on the virtual machine that makes it unique. According to VMware’s vSphere 6.7 vMotion Documentation, this is:

This information includes BIOS, devices, CPU, MAC addresses for the Ethernet cards, chip set states, registers, and so forth.

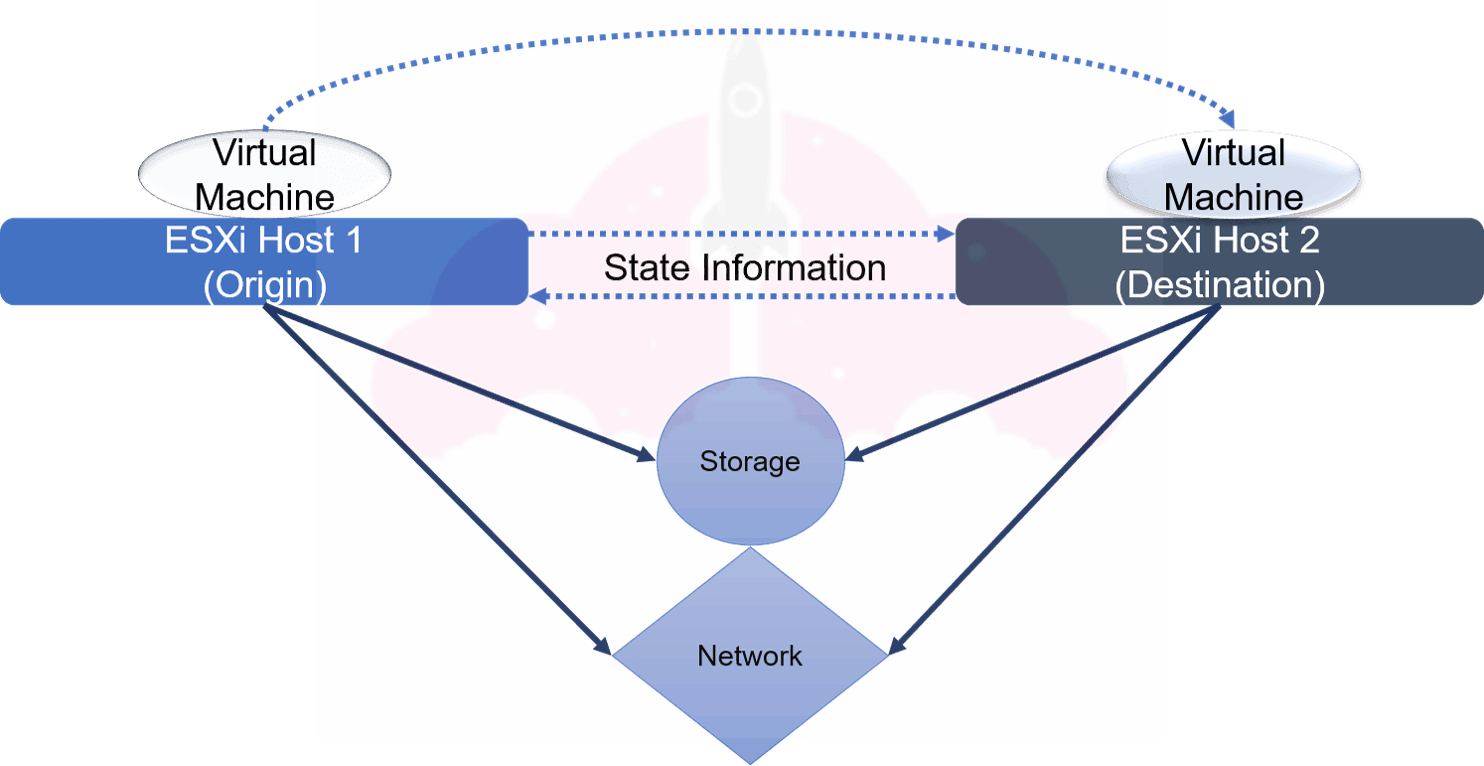

We are transferring everything that makes our virtual machine our virtual machine! Take a look at this diagram:

On ESXi Host 1, we have our virtual machine in its origin. As part of the vMotion operation, which moves the virtual machine from ESXi Host 1 to ESXi Host 2, we are transferring all of the information about our virtual machine between the ESXi hosts, including the contents of the virtual machine’s memory. Once the state has been transferred, the virtual machine will be running on ESXi Host 2, the destination.
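
To make the idea of "transferring state" a bit more concrete, here is a toy Python model of an iterative pre-copy, the general technique live migration relies on: memory is copied while the virtual machine keeps running, pages that changed in the meantime are sent again, and the virtual machine is only paused briefly for the last small remainder. The function name `simulate_precopy`, the page counts, and the dirty rate are all made up for illustration. Treat it as a sketch of the concept, not as VMware's implementation.

```python
def simulate_precopy(total_pages, dirty_percent, switchover_threshold, max_rounds=20):
    """Toy model of iterative pre-copy during a live migration.

    total_pages: number of memory pages the VM has.
    dirty_percent: percentage (0-99) of the pages sent in one pass that the
        still-running VM changes again before that pass finishes.
    switchover_threshold: once this few pages remain, the VM is paused
        briefly and the remainder is sent in one final pass.
    Returns the number of pages sent in each pass, final pass last.
    """
    passes = []
    remaining = total_pages
    for _ in range(max_rounds):
        if remaining <= switchover_threshold:
            break
        passes.append(remaining)                      # send everything pending
        remaining = remaining * dirty_percent // 100  # pages dirtied meanwhile
    passes.append(remaining)                          # final pass, VM paused
    return passes


if __name__ == "__main__":
    sent = simulate_precopy(total_pages=1_000_000, dirty_percent=10,
                            switchover_threshold=500)
    for number, pages in enumerate(sent, start=1):
        print(f"pass {number}: {pages} pages")
```

With these made-up numbers, each pass is ten times smaller than the one before it, which is why the final pause can be short enough that users do not notice.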

Remember, this is a classic vMotion, so two important required components are shared storage and shared networking. We will talk more about vMotion requirements soon.

How Does Storage vMotion Work?

While vMotion focuses on the state of the virtual machine, Storage vMotion is all about, well, the storage. It allows you to move the virtual machine’s files between datastores while a virtual machine is running.

There are a couple of cases where this feature is extremely handy, as mentioned in VMware’s Storage vMotion documentation. For example, you can move a virtual machine to a new datastore to migrate off an old storage array, or to a datastore with faster disks if you need better performance.

Since virtual machines can stick around for a long time, features like vMotion and Storage vMotion make it easy to migrate virtual machines between hosts, clusters, storage arrays, etc.

Types of VMware vMotion

Today, when you migrate a virtual machine, you have many more options than you did when vMotion made its debut in 2003.

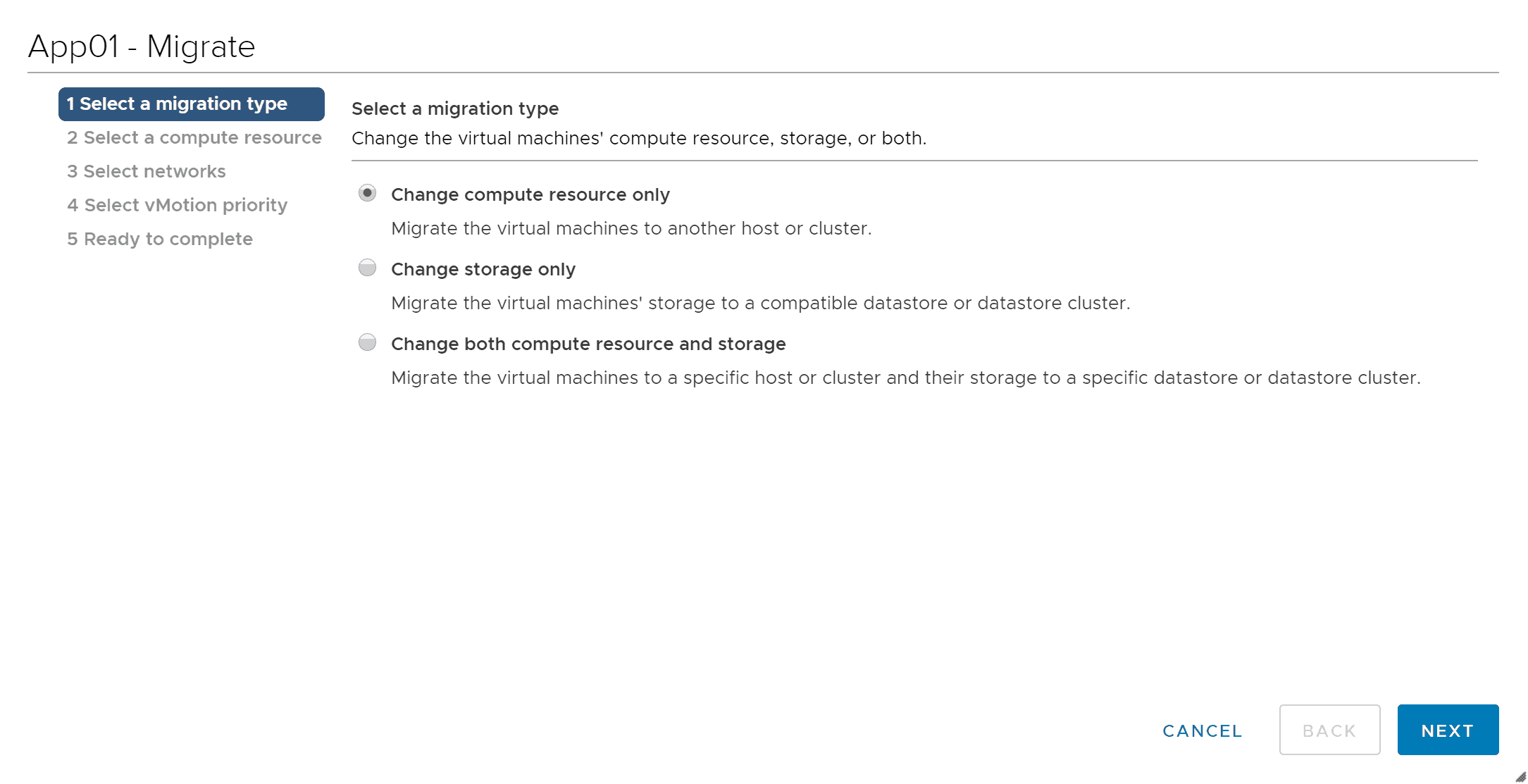

If you right-click a virtual machine in vCenter, you will see the above options. Let’s walk through the different types of vMotion using this as our basis.

- vMotion. Change the compute resource of a VM while it is online (Change compute resource only).

- Storage vMotion. Change the location of the virtual machine’s disks while it is online (Change storage only).

- Change both compute resource and storage. A vMotion mashup allowing you to change both the host and datastore at the same time. This can be handy when moving a virtual machine to a new cluster that does not share storage resources with the previous cluster.
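
The three choices above boil down to two yes/no questions: is the host changing, and is the datastore changing? Here is a small Python sketch of that mapping. The `MigrationType` enum and the `pick_migration_type` function are hypothetical names invented for this example; only the option labels come from the migration wizard.

```python
from enum import Enum


class MigrationType(Enum):
    # Values are the option labels shown in the migration wizard.
    NONE = "Nothing to migrate"
    VMOTION = "Change compute resource only"
    STORAGE_VMOTION = "Change storage only"
    COMBINED = "Change both compute resource and storage"


def pick_migration_type(change_host: bool, change_datastore: bool) -> MigrationType:
    """Map what is changing to the matching migration type."""
    if change_host and change_datastore:
        return MigrationType.COMBINED
    if change_host:
        return MigrationType.VMOTION
    if change_datastore:
        return MigrationType.STORAGE_VMOTION
    return MigrationType.NONE


if __name__ == "__main__":
    # Moving to a new cluster that does not share storage with the old one.
    print(pick_migration_type(change_host=True, change_datastore=True).value)
```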

Impressive, right? That is just the beginning of what vMotion can do. vMotion can also:

- Migrate between vCenters. This functionality was added in vSphere 6.0. Previously, you had to do all sorts of weird stuff to live migrate a virtual machine between vCenters. You can read more about cross vCenter vMotion here.

- Long Distance vMotion. Also released in vSphere 6.0, long distance vMotion is a vMotion operation that happens with a latency of more than 4 ms. Think vMotion across sites. As long as your round trip latency is less than 150 ms, you are good to use vMotion across large distances.

- Encrypted vSphere vMotion. Beginning with vSphere 6.5, vMotion supports encrypted virtual machines. However, it is important to note that if a disk is not encrypted, Storage vMotion will not encrypt the disk during transfer.

As you can see, vSphere 6.0 and vSphere 6.5 were big releases when it comes to VMware vMotion.

VMware vMotion Requirements

Things can get a little confusing when we talk about vMotion requirements, since there are so many different flavors of vMotion.

Starting with classic vMotion, we will need shared storage and shared networking between our source and destination ESXi hosts. This makes sense, because we want to make sure our virtual machine can access the same resources and still communicate when it gets to its new home. When I was first learning VMware, I would always test vMotion between all my hosts after I built a cluster. If there was a configuration error, I would figure it out at that point.

Another important part of this uniform configuration is creating a VMkernel port enabled for vMotion on each host. This is the network the vMotion data is transferred over. Networking may be the trickiest part of vMotion, and the vMotion Networking Requirements KB from VMware is a great place to get started understanding how it functions.

One way to make sure your ESXi host configuration is always the same between hosts is to use VMware host profiles. This makes it easy to ensure you are meeting the requirements for vMotion.
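
If you want to picture what "uniform configuration" means for classic vMotion, here is a rough Python sketch of a preflight check. It works on plain in-memory objects rather than a real vCenter, and the `Host`, `VirtualMachine`, and `vmotion_preflight` names are hypothetical. It only covers the three requirements discussed above (shared storage, shared networking, and a VMkernel port enabled for vMotion), so the real compatibility checks do far more.

```python
from dataclasses import dataclass, field


@dataclass
class Host:
    name: str
    datastores: set = field(default_factory=set)
    port_groups: set = field(default_factory=set)
    has_vmotion_vmkernel: bool = False


@dataclass
class VirtualMachine:
    name: str
    datastores: set = field(default_factory=set)
    port_groups: set = field(default_factory=set)


def vmotion_preflight(vm, source, destination):
    """Return a list of problems; an empty list means the toy checks pass."""
    problems = []
    # Both hosts need a VMkernel port enabled for vMotion traffic.
    for host in (source, destination):
        if not host.has_vmotion_vmkernel:
            problems.append(f"{host.name}: no VMkernel port enabled for vMotion")
    # The destination must see every datastore the VM's files live on.
    missing_datastores = vm.datastores - destination.datastores
    if missing_datastores:
        problems.append(
            f"{destination.name}: cannot see datastores {sorted(missing_datastores)}")
    # The destination must offer every network the VM is connected to.
    missing_port_groups = vm.port_groups - destination.port_groups
    if missing_port_groups:
        problems.append(
            f"{destination.name}: missing port groups {sorted(missing_port_groups)}")
    return problems


if __name__ == "__main__":
    vm = VirtualMachine("web01", {"shared-ds1"}, {"VM Network"})
    esxi1 = Host("esxi-1", {"shared-ds1"}, {"VM Network"}, True)
    esxi2 = Host("esxi-2", {"shared-ds1"}, set(), True)
    for problem in vmotion_preflight(vm, esxi1, esxi2):
        print(problem)
```

This is the same kind of mismatch I used to catch by test-migrating a virtual machine between every pair of hosts in a new cluster.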

When we start to use more advanced vMotion functionality, we will also see the requirements change. The best place to get the latest information is always the Official VMware Documentation and the VMware KB.

Here are some things to consider for other types of vMotion:

- Cross vCenter vMotion. Pay special attention to the requirement of each vCenter being in Enhanced Linked Mode for versions of vSphere earlier than 7.0 U1c.

- Long Distance vMotion Requirements. Remember, you must have a round trip latency of less than 150 ms.

Both of these enhanced vMotion features require a vSphere Enterprise Plus license.
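
The latency numbers are easy to mix up, so here is a short Python sketch that encodes them. It uses the two figures from this article (more than 4 ms counts as long distance, and round trip latency must stay under 150 ms) plus the Enterprise Plus requirement. The function names and the license strings are assumptions for illustration, and the sketch ignores every other licensing and networking rule.

```python
LONG_DISTANCE_MIN_RTT_MS = 4    # above this, the article calls it long distance
LONG_DISTANCE_MAX_RTT_MS = 150  # round trip latency must stay below this


def classify_by_latency(rtt_ms):
    """Classify a planned vMotion by round trip latency in milliseconds."""
    if rtt_ms < 0:
        raise ValueError("latency cannot be negative")
    if rtt_ms >= LONG_DISTANCE_MAX_RTT_MS:
        return "unsupported"
    if rtt_ms > LONG_DISTANCE_MIN_RTT_MS:
        return "long-distance"
    return "standard"


def migration_allowed(rtt_ms, license_edition):
    """Only models the Enterprise Plus rule for long distance vMotion."""
    kind = classify_by_latency(rtt_ms)
    if kind == "unsupported":
        return False
    if kind == "long-distance":
        return license_edition == "Enterprise Plus"
    return True  # assumption: no extra license rule modeled for low latency


if __name__ == "__main__":
    for rtt in (2, 40, 200):
        print(rtt, "ms:", classify_by_latency(rtt))
```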

Now, let’s move on to our next topic, which is VMware DRS.

What is VMware DRS?

VMware DRS stands for Distributed Resource Scheduler. While vMotion came out with ESX 2.0 / Virtual Center 1.0, DRS came out with ESX 3.0 / Virtual Center 2.0. It debuted next to vSphere clusters and vSphere HA. You may also see this referred to as vSphere DRS since it really is a vSphere feature.

Feeling nostalgic? Be sure to check out 13 Things Only Early VMware vSphere Administrators Remember!

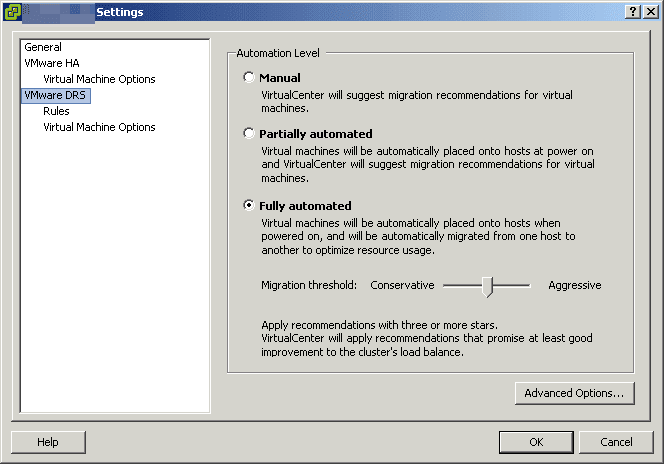

How DRS works in VMware

DRS in VMware is part of the brains of your VMware vSphere Cluster. It takes a look at the hosts in your cluster, and balances out the CPU and Memory utilization between them. If one host becomes overloaded, it will spread out workloads to ensure performance is not impacted.

This is a very simple explanation of what VMware DRS does. It is also one of those VMware features, like vMotion, that is constantly being updated and expanded upon.
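
One simple way to put a number on "balanced" is to look at how far apart the hosts are in utilization. The Python sketch below uses the standard deviation of host utilization as an imbalance score. The `cluster_imbalance` and `is_imbalanced` functions, the single combined load number per host, and the `target` value are all assumptions chosen for illustration. This is not the actual DRS algorithm.

```python
from statistics import pstdev


def cluster_imbalance(hosts):
    """hosts maps a host name to (used, capacity) in the same unit.

    Returns the population standard deviation of per-host utilization,
    where 0.0 means every host is equally loaded.
    """
    return pstdev(used / capacity for used, capacity in hosts.values())


def is_imbalanced(hosts, target=0.1):
    """True when the imbalance score exceeds the (made-up) target."""
    return cluster_imbalance(hosts) > target


if __name__ == "__main__":
    cluster = {"esxi-1": (90, 100), "esxi-2": (10, 100)}
    print(f"imbalance score: {cluster_imbalance(cluster):.2f}")
    print("needs rebalancing:", is_imbalanced(cluster))
```

A cluster where one host sits at 90% and the other at 10% scores far above the target, while two hosts at 50% each score exactly zero.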

Types of VMware DRS

Beyond the classic DRS that balances CPU and Memory resources across a cluster, there are even more DRS features:

- Storage DRS (SDRS). Balances the datastore utilization across datastores. It examines both capacity and performance metrics.

- Predictive DRS. Combines the power of vSphere 6.5+ and vROps 6.4+ to proactively move workloads before a resource imbalance happens.

- Elastic DRS. In VMware Cloud on AWS, Elastic DRS dynamically expands and contracts your cluster by adding and removing ESXi hosts as needed.

At the heart of all types of DRS is an algorithm which is keeping an eye on performance metrics, then moving things around as needed to achieve an optimal configuration. Like vMotion, DRS saw major enhancements in vSphere 6.5. One of these enhancements was an algorithm change which began to take network utilization into account for host performance.

Are you wondering how this movement happens? I think you already know the answer if you have been paying attention!

How VMware vMotion and VMware DRS Work Together

vMotion is what allows things to be moved around non-disruptively in a vSphere environment, and DRS is what decides how to move things around to give the best performance.

When DRS needs to move something, it simply uses vMotion! While these are completely separate technologies, they complement each other nicely. This is where the confusion comes from, since they work together so closely.
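
The split between "deciding" and "moving" can be sketched in a few lines of Python. In this toy model, `drs_recommend` plays the role of DRS and picks a move, while `vmotion` plays the role of vMotion and carries it out. Both functions, the greedy selection rule, the `threshold`, and the load numbers are hypothetical. Real DRS weighs many more factors than a single load value per virtual machine.

```python
def host_load(vms):
    """Total load of one host, given its {vm_name: load} mapping."""
    return sum(vms.values())


def drs_recommend(hosts, threshold=10):
    """Decision step: suggest (vm, source, destination) or None.

    hosts maps a host name to {vm_name: load}, with non-negative loads.
    """
    busiest = max(hosts, key=lambda name: host_load(hosts[name]))
    idlest = min(hosts, key=lambda name: host_load(hosts[name]))
    gap = host_load(hosts[busiest]) - host_load(hosts[idlest])
    if gap <= threshold:
        return None
    # Moving a VM with load x turns the gap into |gap - 2x|; pick the best fit.
    vm = min(hosts[busiest], key=lambda name: abs(gap - 2 * hosts[busiest][name]))
    if abs(gap - 2 * hosts[busiest][vm]) >= gap:
        return None  # no single move would improve the balance
    return vm, busiest, idlest


def vmotion(hosts, vm, source, destination):
    """Mechanism step: relocate the VM from source to destination."""
    hosts[destination][vm] = hosts[source].pop(vm)


def rebalance(hosts, threshold=10, max_moves=10):
    """Keep asking for recommendations and applying them with vmotion."""
    moves = []
    for _ in range(max_moves):
        recommendation = drs_recommend(hosts, threshold)
        if recommendation is None:
            break
        vmotion(hosts, *recommendation)
        moves.append(recommendation)
    return moves


if __name__ == "__main__":
    cluster = {"esxi-1": {"web": 40, "db": 35, "app": 15},
               "esxi-2": {"cache": 10}}
    for vm, source, destination in rebalance(cluster):
        print(f"vMotion {vm}: {source} -> {destination}")
```

Notice that `vmotion` is perfectly usable on its own, while `rebalance` has no way to act without it.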

VMware DRS Requirements

Like VMware vMotion, VMware DRS also has some requirements. Since it is part of a vSphere Cluster, you must have a valid ESXi cluster to turn on DRS. Some of these requirements will be familiar, such as:

- Shared storage

- Compatible processors on ESXi hosts

- vMotion enabled

You can read more about the requirements and considerations for a DRS cluster at VMware Docs.

VMware vMotion and DRS, Perfect Together!

While vMotion and DRS may be different technologies, they are perfect together! You can have vMotion without DRS, but you cannot have DRS without vMotion. Both of these features ensure your VMware vSphere environment is performing optimally, and allow you to move workloads around as you see fit without impacting your applications and users. vMotion and DRS have come a long way since their initial releases many years ago, and are sure to continue evolving.

Melissa is an Independent Technology Analyst & Content Creator, focused on IT infrastructure and information security. She is a VMware Certified Design Expert (VCDX-236) and has spent her career focused on the full IT infrastructure stack.