I recently was setting up a lab, and my goal was to get it up and running as quickly as possible to do some testing. I think we have all been in this boat at one time or another. The setup I was working with was a small nested vSphere environment and the NetApp simulator.

It has been some time since I configured a NetApp, so I thought I would take a few minutes and write a quick and dirty guide to getting started with NetApp NFS and VMware.

Disclaimer: These are not best practices. They may actually be worst practices, but these are the general things you need to do to get up and running quickly. Always sit down and plan a production deployment based on requirements.

Why NetApp NFS and VMware?

Because in my opinion NFS is the quickest way to get NetApp storage presented to your VMware hosts, and that is really all I wanted.

I used to work for NetApp because I liked the way VMware and NetApp worked together so much. It was the main reason I became a NetApp SE years ago. I helped customers do some pretty cool things with NetApp NFS storage and VMware during my time there.

The following are the basic steps you will have to follow to get up and running with VMware and NetApp quickly. It does not really matter if you start with VMware or NetApp first, because there are steps you need on each cluster before you can actually present storage.

Set Up Your VMware vSphere Environment for NFS

I am going to start with the VMware vSphere side because, well, why not. Here are the general things you need to do in vSphere:

- Create a VMkernel port for NFS access

- Mount the NetApp storage

Easy, right?

Creating a VMkernel Port for NFS Access on ESXi Hosts

You will need to create a VMkernel port for NFS access on ESXi hosts. Before you do this, you need to decide what network you will be using for NFS.

My lab already has an NFS VLAN, so I got off easy here. I connected a NIC on my nested ESXi host to this VLAN before I did any configuration.

I chose standard vSwitches for no good reason other than I had some PowerCLI for them stashed away, and I found it before I found what I had for distributed virtual switches.

I wrote a series on PowerCLI and networking which you can find the beginning of here, but here is the PowerCLI I used for each ESXi host:

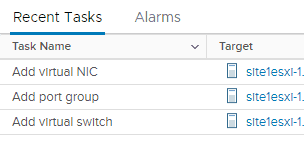

New-VirtualSwitch -VMHost host.domain.com -Name vSwitch1 -Nic vmnic2 -Mtu 1500

New-VMHostNetworkAdapter -VMHost host.domain.com -PortGroup NFS -VirtualSwitch vSwitch1 -IP X.X.X.X -SubnetMask X.X.X.X

Ding, hosts are done!
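If you have more than one or two hosts, the same two cmdlets can be wrapped in a loop. This is only a sketch: the host names, IP addresses, and the 255.255.255.0 mask below are made-up placeholders, and it assumes you have already connected with Connect-VIServer and that vmnic2 is the free uplink on every host.

```powershell
# Hypothetical map of ESXi host name -> NFS VMkernel IP (placeholders, not real lab values)
$nfsIps = @{
    'esx01.domain.com' = '192.168.50.11'
    'esx02.domain.com' = '192.168.50.12'
}

foreach ($hostName in $nfsIps.Keys) {
    $vmhost = Get-VMHost -Name $hostName

    # Same vSwitch name, uplink, and MTU as the one-liners above
    $vswitch = New-VirtualSwitch -VMHost $vmhost -Name vSwitch1 -Nic vmnic2 -Mtu 1500

    # On a standard vSwitch this creates the NFS port group if it does not exist,
    # then adds a VMkernel adapter to it
    New-VMHostNetworkAdapter -VMHost $vmhost -PortGroup NFS -VirtualSwitch $vswitch `
        -IP $nfsIps[$hostName] -SubnetMask 255.255.255.0
}

# Quick check of the VMkernel adapters on those hosts
Get-VMHostNetworkAdapter -VMHost (Get-VMHost -Name @($nfsIps.Keys)) -VMKernel |
    Select-Object VMHost, Name, PortGroupName, IP, SubnetMask, Mtu
```

The last command is just a sanity check so you can see the NFS adapter and its IP on each host before moving on to the storage side.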

The next step for your VMware environment is to mount the storage, but you must configure your NetApp first.

Configure NetApp to Serve NFS Datastores to VMware

Luckily the cluster setup wizard now takes care of all the annoying stuff when you deploy NetApp ONTAP. The basic things you will need to do to your NetApp are:

- Create an SVM with the NFS protocol

- Start the NFS service (Don’t forget this!)

- Create a LIF and tie it back to the NFS SVM

- Create a volume

- Create an export policy

- Map the export policy to the volume

- Configure any additional storage efficiencies or data protection

That’s pretty much it. I did this all manually because, well, I don’t have a good reason. I ended up deploying a new virtual NetApp for something else that needed iSCSI and used Ansible for the whole configuration. It was magical.

Configuring Your NetApp With Ansible

I am not going to share my code right now, since everything I did was for iSCSI anyway, and it is generally pretty sloppy looking. It probably isn’t 100% proper, but hey, it worked and that was good enough for me.

What I can tell you is that everything you ever needed to know about NetApp and Ansible is already out there for you. You just have to piece it together.

I found this amazing blog series on NetApp.io that had me up and running in a few hours, and it took that long mostly because I had never used Ansible before. You can use Ansible for as little or as much as you want to.

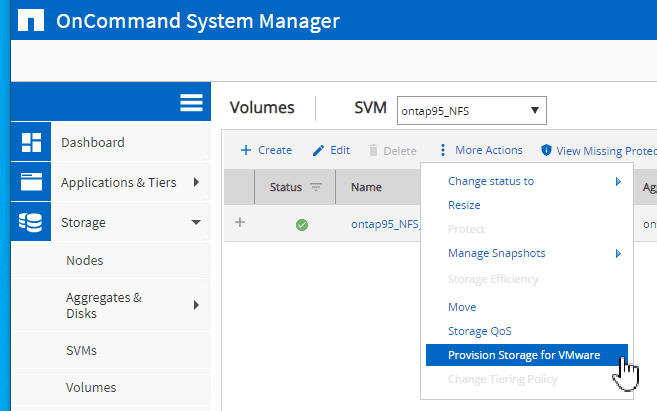

Provisioning Storage for VMware Through NetApp System Manager

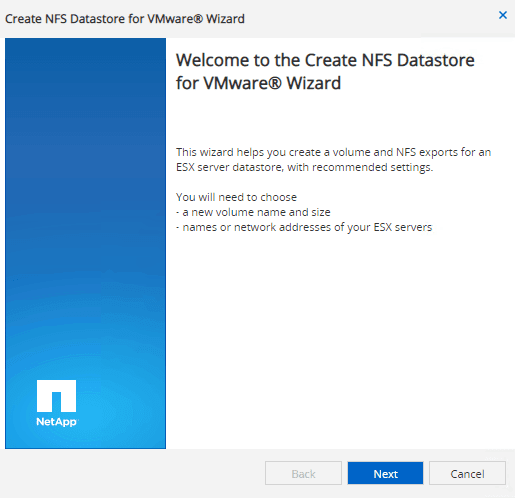

There’s a cool feature I noticed that allows you to provision NFS storage for VMware. It takes care of some of the things that you may forget, like your export policy, which is where people most commonly mess things up.

You can find this handy wizard right here:

This is built right into System Manager, no vCenter plugins or anything fancy or extra required.

This is hands down the quickest and easiest way to get storage into your VMware environment from your NetApp. Follow the handy wizard.

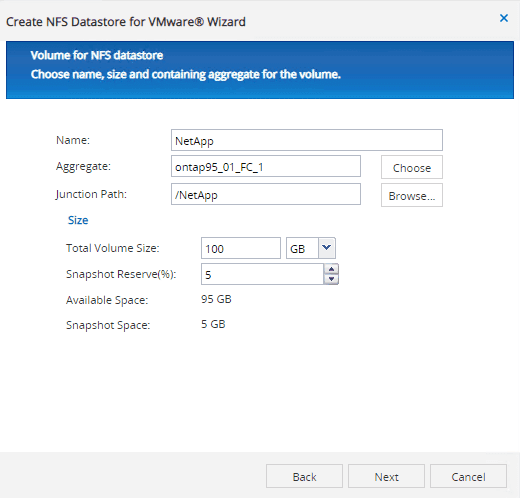

It pre-populated a garbled, nonsensical volume name. I changed it to what I wanted, but it did not immediately update the junction path, so I clicked Browse to fix this.

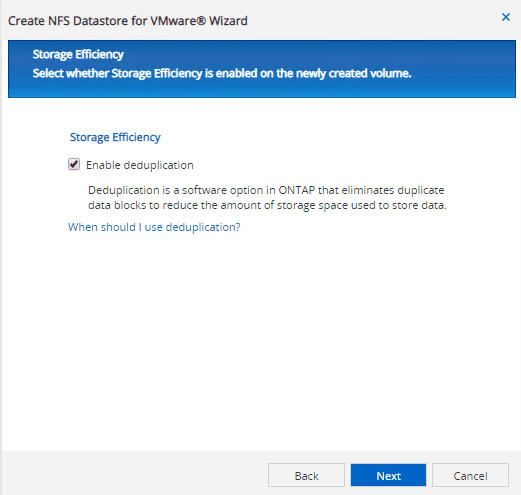

You can also enable deduplication for your NetApp NFS VMware datastore right through this handy wizard.

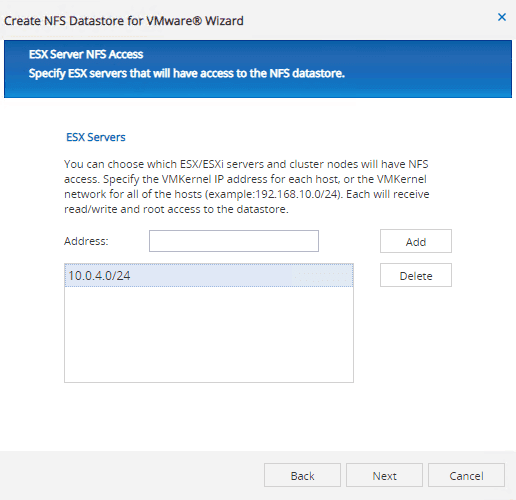

The wizard asks for the NFS IPs of your ESXi servers so it can create and map the export policy for you. Here is one of those worst practices I mentioned: I just opened up access to this datastore to every host on that subnet. A more secure choice would be to add the IP address of each ESXi host’s NFS VMkernel port individually.
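If you want to go the more secure route, you need each host's NFS VMkernel IP. Here is a rough PowerCLI sketch for collecting them. It assumes the port group is named NFS, as in the earlier commands, and the cluster name Lab is just a placeholder for your own.

```powershell
# 'Lab' is a placeholder cluster name; 'NFS' matches the port group created earlier
Get-Cluster -Name Lab | Get-VMHost |
    Get-VMHostNetworkAdapter -VMKernel |
    Where-Object { $_.PortGroupName -eq 'NFS' } |
    Select-Object VMHost, Name, IP
```

You can then enter those addresses in the wizard instead of the whole subnet.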

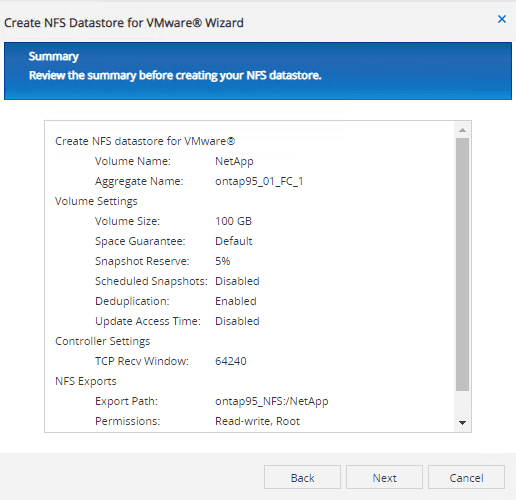

Can you believe we are almost done with this wizard? It just did all the stuff that can be painful (and error-prone) when setting up your NetApp for VMware datastores.

Here is the summary of what we just did:

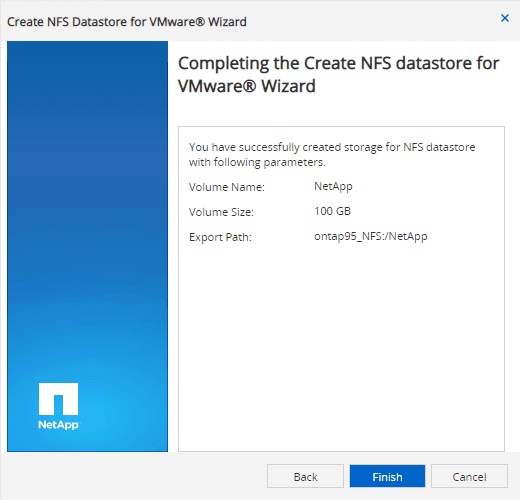

We are good to go.

Seriously. That’s it. Your storage is ready to be mounted into your ESXi hosts.

Mount NetApp NFS Datastore in ESXi

Now that both our vSphere ESXi hosts and our NetApp ONTAP cluster are configured, we are ready to mount an NFS datastore to ESXi.

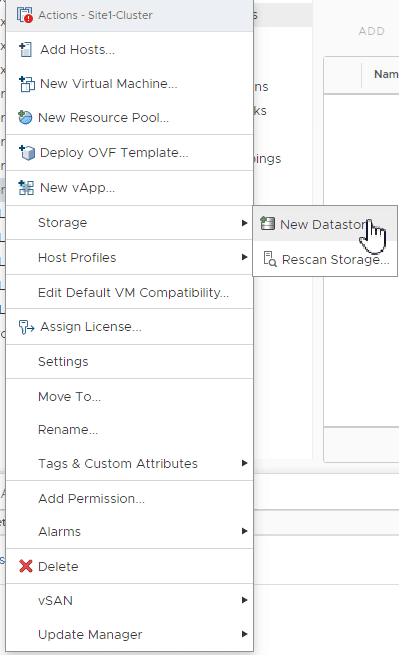

There are, of course, several ways to do this. The first is the vSphere Client, which is not a bad choice.

Simply right-click a cluster, and select New Datastore from the Storage menu.

This will bring you through a handy wizard to help you mount the storage.

Pay special attention to Step 4, which is Host Accessibility. At this point in the wizard you have the option to select every host you would like the datastore mounted to, which will be all the hosts in your cluster.

This is much easier than having to mount the datastore to each host in the cluster.

On the other hand, you can also use PowerCLI.

Get-VMHost host.domain.com | New-Datastore -Nfs -Name nfs01 -Path /volumepath -NfsHost X.X.X.X

I used this line when I just wanted to connect my volume to a single host to test something quickly. It was quite handy since I already had PowerCLI open.
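The same cmdlet should also work across a whole cluster, since New-Datastore accepts hosts from the pipeline. A sketch, assuming a cluster named Lab (a placeholder) and the same placeholder datastore name, volume path, and NFS server address as the line above:

```powershell
# Mount the NFS export on every host in the (placeholder) cluster 'Lab'
Get-Cluster -Name Lab | Get-VMHost |
    New-Datastore -Nfs -Name nfs01 -Path /volumepath -NfsHost X.X.X.X

# Confirm which hosts now see the datastore
Get-Datastore -Name nfs01 | Get-VMHost | Select-Object Name, ConnectionState
```

If a host is missing from the second command's output, the export policy on the NetApp side is the first place I would look.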

Like with any other task in VMware vSphere, there are many ways to accomplish the same thing.

Summary of Configuring NetApp NFS Storage for VMware ESXi

To configure NetApp NFS storage for VMware vSphere, you need to do a few things:

- Configure a VMkernel port on ESXi hosts for NFS storage access

- Configure NetApp to serve NFS storage

- Create a volume for VMware ESXi hosts

- Mount NetApp volume to ESXi hosts

That’s about it! You should be ready to roll with your new NFS-based NetApp datastores.

Melissa is an Independent Technology Analyst & Content Creator, focused on IT infrastructure and information security. She is a VMware Certified Design Expert (VCDX-236) and has spent her career focused on the full IT infrastructure stack.