I started working with the Cisco Unified Computing System, more commonly known as Cisco UCS, very soon after its initial release. Cisco UCS is an awesome and powerful server platform that is a great fit for virtualization and pretty much any workload you can imagine. What I also realized is that with all of my UCS experience, and all of the blogging I have done, I have never sat down and written an overview of Cisco UCS architecture. Why is this important, you ask? After all, it is just a server platform. It is important because Cisco UCS differs fundamentally from most other server platforms you are used to working with.

In this article we will talk about the following:

- The Main Cisco UCS Architectural Difference

- Overview of Cisco UCS Components

- Overview of Cisco UCS Architecture (How the Components Connect)

Buckle up, because you are about to learn more than you ever wanted to know about Cisco UCS! Be sure to take a look at the complete list of Cisco UCS Deep Dives I have written at the end of this article.

The Fundamental Difference With Cisco UCS Architecture

Let’s dive right into it. What is the big difference when you are designing a Cisco UCS System? Cisco UCS leverages something called a Fabric Interconnect. While you may be used to connecting servers directly to a switch, this is not what is done in the Cisco UCS world. With Cisco UCS, servers are connected to the Fabric Interconnect, then the Fabric Interconnect is connected to the network switches.

The Cisco Fabric Interconnect is ALWAYS deployed in pairs, and each server or blade chassis is connected to both Fabric Interconnects to protect against Fabric Interconnect failure. It also makes maintenance easy, since you can update the software on the Fabric Interconnects one at a time, non-disruptively.

This special software is called Cisco UCS Manager, which we are going to talk about more soon, so stay tuned!

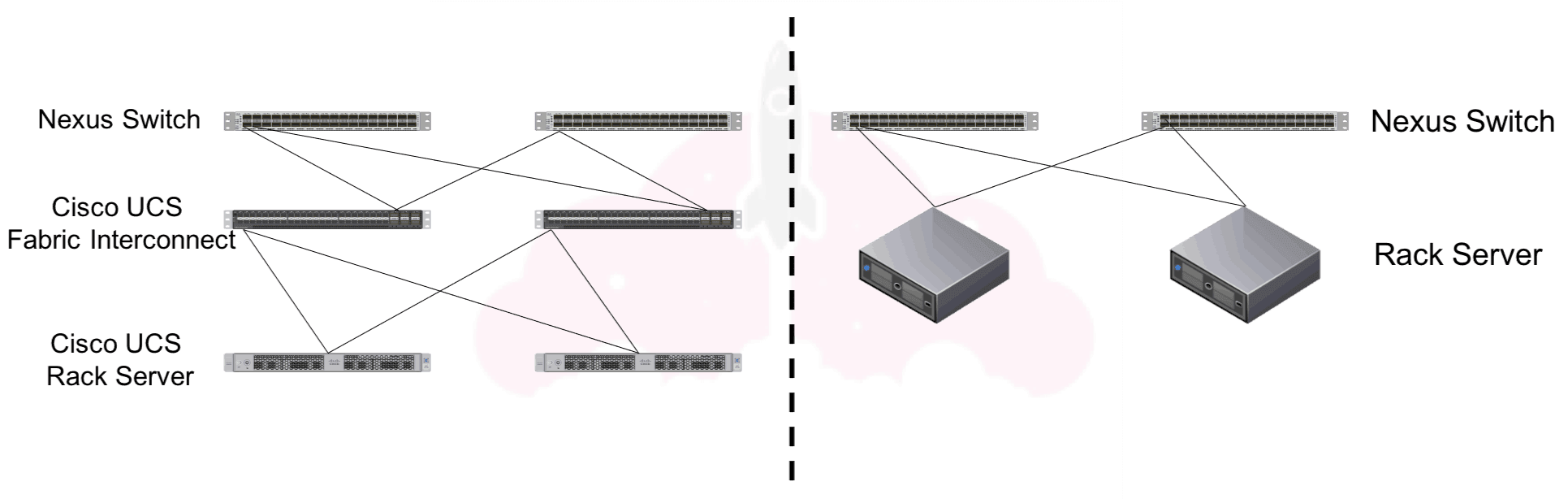

This diagram compares a very basic Cisco UCS system to a regular old rack system:

As you can see, the main difference is the introduction of the Cisco UCS Fabric Interconnect.
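To make the redundancy argument concrete, here is a minimal sketch in Python that models the cabling as a graph and checks whether a server still reaches the upstream switch after any single Fabric Interconnect fails. The device names (`server-1`, `fi-a`, `fi-b`, `switch-1`) are made up for illustration; this is a toy model, not something Cisco UCS runs.

```python
from collections import deque

# Hypothetical device names; each tuple is one cable between two devices.
UCS_LINKS = [
    ("server-1", "fi-a"), ("server-1", "fi-b"),
    ("fi-a", "switch-1"), ("fi-b", "switch-1"),
]


def reachable(links, start, goal, failed=frozenset()):
    """Breadth-first search that ignores any device listed in `failed`."""
    if start in failed or goal in failed:
        return False
    neighbours = {}
    for a, b in links:
        if a in failed or b in failed:
            continue  # a cable is useless if either end is down
        neighbours.setdefault(a, set()).add(b)
        neighbours.setdefault(b, set()).add(a)
    seen, queue = {start}, deque([start])
    while queue:
        node = queue.popleft()
        if node == goal:
            return True
        for nxt in neighbours.get(node, ()):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return False


def survives_single_failure(links, server, upstream, candidates):
    """True if the server still reaches upstream after losing any one candidate."""
    return all(reachable(links, server, upstream, {dev}) for dev in candidates)


print(survives_single_failure(UCS_LINKS, "server-1", "switch-1", ["fi-a", "fi-b"]))
```

Dropping either of the two server cables from `UCS_LINKS` makes the check fail, which is the whole point of connecting every server or chassis to both Fabric Interconnects.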

I also probably just busted the first myth about Cisco UCS. While many people do deploy blade servers in their Cisco UCS systems, rack servers are also a part of Cisco UCS. Blade or rack, you decide what fits your requirements and your use case. For some reason, I always run into people that think Cisco UCS is only about blade servers.

Overview of Cisco UCS Components

Now, we are going to break down the components of the Cisco UCS system before I show you how they all come together.

Cisco UCS Fabric Interconnects

The Cisco UCS Fabric Interconnect exists to connect your Cisco UCS Servers to your network. Beyond this, the Fabric Interconnects are where Cisco UCS Manager runs, which is the software secret sauce for Cisco UCS. When you are logging into your Cisco UCS system, you are actually logging into Cisco UCS Manager via the Fabric Interconnects.

Each Fabric Interconnect of course has a unique IP address, but a VIP (virtual IP address) is also created for the system as a whole. Often, you may just hear the Fabric Interconnects referred to as fabrics. Usually, we call one Fabric Interconnect Fabric A, and the other Fabric B.
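If you are planning the three management addresses, a quick sanity check can save a typo later. The sketch below assumes, as a planning rule of thumb rather than anything stated in this article, that Fabric A, Fabric B, and the VIP should be unique and sit in the same subnet. The function name and the example addresses (from a documentation-only range) are hypothetical.

```python
import ipaddress


def check_management_addresses(fabric_a, fabric_b, vip, prefix_len):
    """Return a list of problems; an empty list means the plan looks sane."""
    addrs = {"Fabric A": fabric_a, "Fabric B": fabric_b, "VIP": vip}
    problems = []
    if len(set(addrs.values())) != len(addrs):
        problems.append("addresses must be unique")
    # Assumption: the subnet is defined by Fabric A's address and prefix length.
    network = ipaddress.ip_interface(f"{fabric_a}/{prefix_len}").network
    for name, addr in addrs.items():
        if ipaddress.ip_address(addr) not in network:
            problems.append(f"{name} ({addr}) is outside {network}")
    return problems


print(check_management_addresses("192.0.2.11", "192.0.2.12", "192.0.2.10", 24))
```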

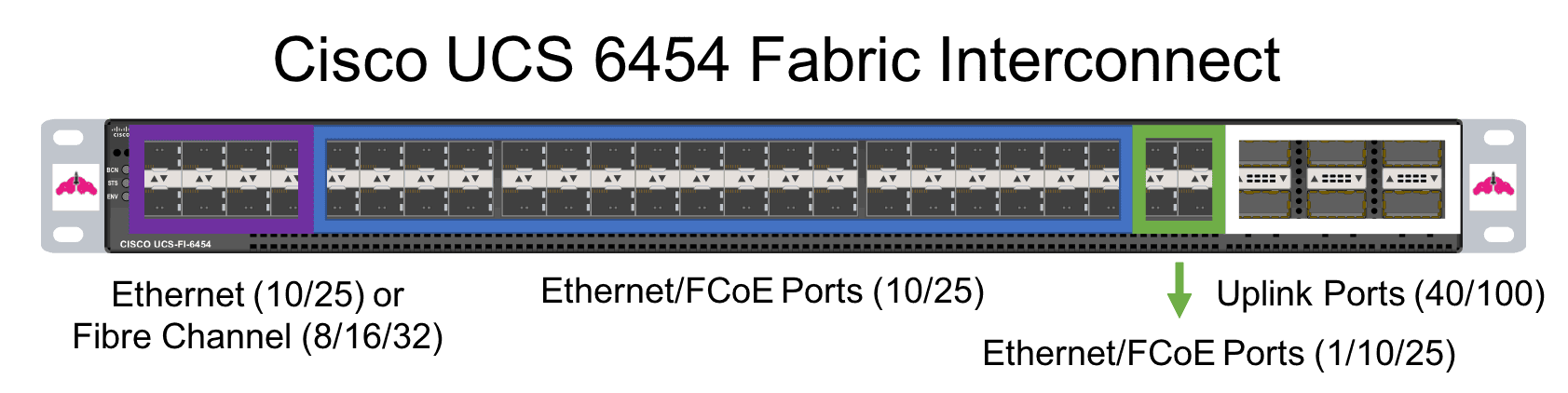

One of the coolest features of a Fabric Interconnect is that you can configure the personality of some of the ports. Generally, there are three types of ports on a Fabric Interconnect:

- Universal Ports – Operate as Ethernet or Fibre Channel ports

- Ethernet Ports – For Ethernet or FCoE connectivity

- Uplink Ports – for uplink to the Cisco Nexus Infrastructure

The Universal Ports are the first ports of the Fabric Interconnect. In the case of the Cisco UCS 6454 Fabric Interconnect, they are the first 8 ports. Here is a diagram of the ports on the Cisco UCS 6454 Fabric Interconnect labeled:

You can find a deep dive explanation of the Cisco UCS 6454 Fabric Interconnect here.

Now, back to the ports on our Cisco Fabric Interconnect. Why am I mentioning this? Because if you are going to use Fibre Channel, you are going to have a bad time if you do not cable correctly up front! You must use the first eight ports for Fibre Channel on the 6454 Fabric Interconnect. Hopefully I just saved someone out there the horror of having to re-cable their Fabric Interconnect!
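The cabling rule is easy to check before anyone touches a cable. Here is a small sketch, assuming only what is stated above (ports 1 through 8 are the Universal Ports on the 6454). The function name is made up, and the port range is a parameter because it may differ on other models or software releases.

```python
# Taken from the text above: on the 6454, ports 1-8 are the Universal Ports.
UNIVERSAL_PORTS_6454 = range(1, 9)


def invalid_fc_ports(requested_ports, universal_ports=UNIVERSAL_PORTS_6454):
    """Return the requested Fibre Channel ports that cannot run Fibre Channel."""
    return sorted(p for p in set(requested_ports) if p not in universal_ports)


# A cabling plan that wants Fibre Channel on ports 7 through 10.
print(invalid_fc_ports([7, 8, 9, 10]))
```

Any port number this returns is one you would have to re-cable.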

Then we have Ethernet ports which you can use at varying speeds, of course, because Cisco UCS is so easy to customize, and finally uplink ports to connect to your Nexus infrastructure.

Cisco UCS VIC (Virtual Interface Card)

Think of the VIC as the Cisco UCS network card, except for one minor detail. Notice how it is named a VIC, not a NIC? That is because there is a powerful abstraction and virtualization layer at play here. UCS Manager can be used to make the VIC appear as up to 256 virtual devices. Pretty cool, right? Say goodbye to port constraints for things like VMware vSphere on your server. You can configure the virtual devices however you want!
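As a rough illustration of what "however you want" can look like, the sketch below plans one virtual NIC per fabric for each traffic type and checks the total against the 256-device limit mentioned above. The naming scheme and the `plan_vnics` helper are hypothetical, not a UCS Manager feature.

```python
MAX_VIRTUAL_DEVICES = 256  # upper bound quoted in the text above


def plan_vnics(purposes, limit=MAX_VIRTUAL_DEVICES):
    """Create one vNIC per fabric for each traffic type, e.g. mgmt-a and mgmt-b."""
    vnics = [f"{purpose}-{fabric}" for purpose in purposes for fabric in ("a", "b")]
    if len(vnics) > limit:
        raise ValueError(f"{len(vnics)} virtual devices exceeds the limit of {limit}")
    return vnics


# Three traffic types, each with an A-side and a B-side vNIC.
print(plan_vnics(["mgmt", "vmotion", "vm"]))
```

On a physical NIC you would be limited by the number of ports on the card; here the only hard ceiling in the model is the virtual device limit.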

In a rack server configuration, the VIC card would connect either to the Fabric Interconnect or a Fabric Extender (FEX), which we will soon learn more about.

In a blade server configuration, the VIC card connects to the FEX, which resides in the back of the blade chassis. It cannot connect directly to the Fabric Interconnect.

For more information on Cisco UCS VICs, be sure to check out the Cisco UCS VIC 1400 Deep Dive. The VIC 1400 series are the VICs specially designed for the Cisco UCS M5 generation of hardware.

Cisco UCS Blade Server Chassis

The Cisco UCS Blade Server Chassis is called the Cisco UCS 5108 chassis. It is stupid, and meant to be that way. It provides power to your blade servers, and has slots to add FEXes for connectivity. That is its only job, as it should be. There are 8 blade slots in a UCS 5108 chassis.

Cisco UCS FEX (Fabric Extender)

There are two types of Cisco FEXs or Fabric Extenders in the Cisco UCS world:

- Blade Chassis FEX

- Rack Mount FEX

Now, we are going to take a look at each of them.

Blade Chassis Cisco FEX

If you are using blade servers, you must have FEXes in the back of the blade chassis to provide connectivity to the Cisco UCS system. The FEXes are connected to the Fabric Interconnects. There are two FEX slots in the Blade Chassis, one for A side connectivity and one for B side connectivity. FEX A connects to Fabric Interconnect A, and FEX B connects to Fabric Interconnect B.

The FEXes are not cross connected to the opposite Fabric Interconnect, like your instinct may tell you to do. Failure of a FEX or Fabric Interconnect is handled at the software layer, by either the software running on your blades or by UCS Manager. It is your choice!
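Because there is no cross connection, each fabric is its own independent chain from the blade to a Fabric Interconnect. The following sketch models that idea with made-up device names and a hypothetical `pick_fabric` helper; it illustrates the failover decision, not how UCS Manager or your operating system actually implements it.

```python
# Each fabric is an independent chain; there is no cable from FEX A to FI B.
FABRIC_PATHS = {
    "A": ["fex-a", "fi-a"],
    "B": ["fex-b", "fi-b"],
}


def usable_fabrics(failed_devices):
    """Return the fabrics whose whole chain is still healthy."""
    failed = set(failed_devices)
    return [name for name, chain in FABRIC_PATHS.items() if not failed & set(chain)]


def pick_fabric(preferred, failed_devices):
    """Stay on the preferred fabric if possible, otherwise fail over to the other."""
    usable = usable_fabrics(failed_devices)
    if preferred in usable:
        return preferred
    return usable[0] if usable else None


print(pick_fabric("A", ["fex-a"]))
print(pick_fabric("A", ["fex-a", "fi-b"]))
```

The second call shows the trade-off of this design in the model: losing FEX A and Fabric Interconnect B at the same time leaves no usable path, because traffic cannot hop from one fabric's chain to the other.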

Rack Mount Cisco FEX

This operates on a similar principle to the Blade Chassis FEX, except it is mounted in a rack. The purpose of this is to add more ports to your Fabric Interconnect so you can take advantage of Cisco UCS’s full potential. 54 ports can get eaten up pretty quickly, and this is Cisco’s answer to that problem.

Cisco UCS B-Series Blade Servers

A blade server is, well, a blade server. If you see a B in front of a UCS Server name, for example the Cisco UCS B200 M5, you automatically know that model is a blade server. It contains all of the things a server should, and is given power and connectivity by the Cisco UCS 5108 Blade Chassis.

The blade server also contains a VIC card (or two!) for connectivity. There are single width, double width, and double width double height blades available. Blades are placed into the Cisco UCS 5108 blade chassis horizontally, so with the 8 available slots you can have 8 single width blades, 4 double width blades, or 2 double width double height blades.
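The slot math above works out to one slot per single width blade, two per double width blade, and four per double width double height blade. Here is a tiny sketch of that arithmetic. The labels and function names are my own, and it only counts slots; it does not model any physical placement rules a real chassis may have.

```python
SLOTS_PER_CHASSIS = 8
# Slots consumed per blade type, derived from the 8 / 4 / 2 figures above.
SLOTS_USED = {"single": 1, "double": 2, "double-height": 4}


def slots_needed(blades):
    """Total chassis slots consumed by a list of blade types."""
    return sum(SLOTS_USED[blade] for blade in blades)


def fits_in_chassis(blades, slots=SLOTS_PER_CHASSIS):
    """True if the mix of blades fits in one chassis by slot count alone."""
    return slots_needed(blades) <= slots


mixed = ["double-height", "double", "single", "single"]
print(slots_needed(mixed), fits_in_chassis(mixed))
```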

The M5 generation of B-Series Blade Servers brought all sorts of great features to UCS, most notably upgraded processors and increased memory capacities. The UCS B200 M5 is really the workhorse of the Cisco UCS platform at this time.

Cisco UCS C-Series Rack Servers

You guessed it, a rack server is a rack server, and contains everything it should. If you see a C in front of a UCS Server name, such as the Cisco UCS C220 M5, you know you are working with a rack server. It contains a VIC card (or cards) for connectivity, and connects directly to the Fabric Interconnect or a Rack Mount FEX.

You can find a deep dive of the Cisco UCS C220 M5 rack server here. If you are interested in Artificial Intelligence (AI) or Machine Learning (ML), be sure to check out the Cisco UCS C480 ML which was purpose built for these applications.

A Word on Un-managed Cisco UCS

I want to talk about “un-managed Cisco UCS” for a moment. This is basically Cisco UCS without UCS Manager or Fabric Interconnects. You can totally deploy rack servers only like this, but why would you? Unfortunately I know this answer from experience. It is all about size and cost of your environment.

If you are deploying a two node VMware vSphere cluster and have limited space, chances are adding the Fabric Interconnects is going to be overkill for this project. This configuration is usually seen in remote and branch offices.

While it is a very valid use case, it makes my heart a little sad, because Cisco UCS Manager is the best thing ever.

Un-managed Cisco UCS is super easy to deploy however, because it pretty much follows the architecture of every other rack server deployment out there.

Instead of Cisco UCS Manager, Cisco IMC (Integrated Management Controller) is used to manage your server. While it plays the same role as the management software other rack server vendors provide, it is very powerful and easy to use.

Cisco UCS Manager

Finally, let’s talk briefly about Cisco UCS Manager (since I could probably write a dissertation on it). Cisco UCS Manager is the software secret sauce that makes Cisco UCS so awesome.

You know what VMware does for Virtual Machines? Well, Cisco UCS Manager does that for hardware. My hands down favorite feature is something called a service profile. A Service Profile is basically your blade’s hardware configuration, from mostly a network connectivity perspective.

For example, I may have a VMware vSphere ESXi service profile with six virtual NICs configured for my ESXi host. With a simple reboot, I can have that server come back up with a different service profile, like one for a Microsoft Windows Server with two NICs. In this service profile, I can also put things like which VLANs will be accessed, names and connection order of the virtual NICs, boot order, and boot devices. The possibilities for customization are really endless.
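To show why this is so handy, here is a toy model of the idea in Python: the profile holds the identity and connectivity settings, and the blade is just the hardware it gets attached to. The class names, vNIC names, VLAN IDs, and boot devices are all invented for illustration. This is not the UCS Manager object model or its API.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass(frozen=True)
class ServiceProfile:
    """A toy stand-in for a service profile: settings only, no hardware."""
    name: str
    vnics: Tuple[str, ...]       # virtual NIC names, in connection order
    vlans: Tuple[int, ...]       # VLAN IDs the profile may access (made-up values)
    boot_order: Tuple[str, ...]  # boot devices, first entry is tried first


@dataclass
class Blade:
    slot: int
    profile: Optional[ServiceProfile] = None

    def associate(self, profile: ServiceProfile) -> "Blade":
        """Attach a profile; per the text, real hardware picks it up after a reboot."""
        self.profile = profile
        return self


ESXI = ServiceProfile(
    name="esxi-host",
    vnics=("mgmt-a", "mgmt-b", "vmotion-a", "vmotion-b", "vm-a", "vm-b"),
    vlans=(10, 20, 30),
    boot_order=("san", "lan"),
)
WINDOWS = ServiceProfile(
    name="windows-server",
    vnics=("prod-a", "prod-b"),
    vlans=(40,),
    boot_order=("local-disk",),
)

blade = Blade(slot=1).associate(ESXI)
print(blade.profile.name, len(blade.profile.vnics))
blade.associate(WINDOWS)
print(blade.profile.name, len(blade.profile.vnics))
```

The same blade goes from six virtual NICs to two without anyone touching the hardware, which is the hardware abstraction a service profile gives you.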

Cisco UCS Manager also lets you manage all of the Cisco Servers you have connected to your Fabric Interconnect pair in one handy interface. The set of servers that a pair of Fabric Interconnects supports is called a UCS Domain.

Along with the Cisco UCS 6454 Fabric Interconnect comes the Cisco UCS Manager 4.0 code line. You will almost always see a new release of Cisco UCS Manager when new UCS hardware is introduced.

Overview of A Sample Cisco UCS Architecture

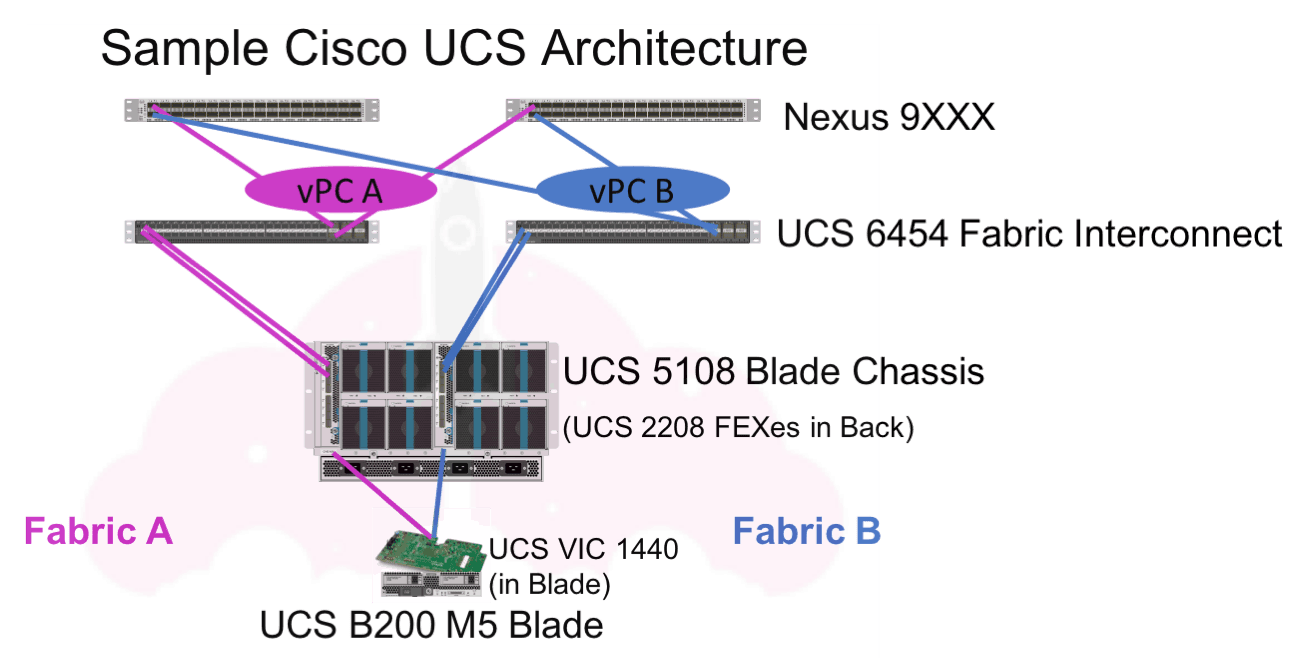

Now that we have talked about all of the components, and showed a high level overview, I want to show you what a UCS Blade Server deployment would look like with the above components. Stay with me, because sometimes things with blade servers can get confusing if you have not worked with them before. We are going to examine this diagram from the top down:

(Note: please don’t copy this architecture and deploy it in production! There is way more to the UCS design process that we have not talked about yet!)

Cisco Nexus Switch

This is the plain old Cisco Nexus switch we all know and love. How it uplinks to the rest of your environment is up to you, but in this design the Cisco UCS Fabric Interconnects rely on Nexus switches for upstream connectivity.

Cisco UCS 6454 Fabric Interconnects

The Fabric Interconnects connect to the rest of your environment via uplink ports to the Nexus switches. You will need to determine how many uplinks to use based on your bandwidth requirements, which is a very important topic we have yet to talk about.

To connect to the Cisco Nexus switches we use vPCs, or virtual PortChannels. A vPC is simply a PortChannel that spans the two Nexus switches you are connecting to, which provides redundancy. If one of the upstream Nexus switches fails, your UCS system will still be operational.
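When you do sit down to size uplinks, it helps to look at what is left after a failure, not just the healthy total. The sketch below is simple arithmetic for one Fabric Interconnect with a PortChannel spread across two upstream switches. The link counts and speeds are placeholder values, not a recommendation, and the function name is hypothetical.

```python
def uplink_bandwidth_gbps(links_per_switch, link_speed_gbps, switches=2, failed_switches=0):
    """Bandwidth one Fabric Interconnect has left towards the upstream switches."""
    healthy = max(switches - failed_switches, 0)
    return healthy * links_per_switch * link_speed_gbps


# Placeholder design: one 40 Gbps link from this Fabric Interconnect to each Nexus.
normal = uplink_bandwidth_gbps(links_per_switch=1, link_speed_gbps=40)
degraded = uplink_bandwidth_gbps(links_per_switch=1, link_speed_gbps=40, failed_switches=1)
print(normal, degraded)
```

If the degraded number is below what your workloads need, the system will stay up when a Nexus switch fails, but it may not stay fast.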

Cisco UCS 5108 Blade Chassis

Where your blades live! It provides power and connectivity from your blade’s VIC card to the Fabric Extender, which in turn connects to the Fabric Interconnect.

Cisco UCS 2208 Fabric Extenders

The Fabric Extender is what allows your blade to connect to the Cisco UCS Fabric Interconnects. These models specifically are compatible with the blade chassis and Fabric Interconnect we are using.

Cisco UCS VIC 1440

The VIC for your blade server, which can be carved up into up to 256 virtual adapters. You cannot use the same VIC in a rack mount server as you can in a blade server. While they provide the same functionality, the models are different.

Cisco UCS B200 M5 Blade Server

Finally! We have gotten to our server. The Cisco UCS B200 M5 blade server is a great workhorse, and completely customizable. Once everything has been racked, stacked, and connected, the next step is to connect to the Cisco UCS via UCS Manager and begin configuration.

The Flexibility of the Cisco UCS Architecture

This diagram is just one sample of what a Cisco UCS architecture may look like. You may have blade servers, rack servers, or a combination of both. You may also have different models of each server type, with different configurations of CPU and Memory. What your Cisco UCS Architecture looks like completely depends on your infrastructure’s requirements.

If you want to learn more about the architecture of Cisco UCS, I encourage you to check out the Cisco UCS Platform Emulator. The Cisco UCS Platform Emulator allows you to build a virtual hardware configuration, then configure that virtual UCS hardware and manage it with Cisco UCS Manager. It is very easy to get started with, and is not resource intensive. You can run it right on your laptop or desktop.

You can find out more about the Cisco UCS Platform Emulator with these guides:

- Setup and Use of the Cisco UCS Platform Emulator

- Tips and Tricks for Using the Cisco UCS Platform Emulator

- Updating Your UCS Platform Emulator

- Changing Fabric Interconnects on the UCS Platform Emulator

- Getting Started With Cisco UCS Platform Emulator Version 3.2(3ePE1)

Cisco UCS Deep Dives

I have written a number of deep dives on Cisco UCS to help you learn more.

- Cisco UCS 6454 Fabric Interconnect Deep Dive

- Cisco VIC 1440 Deep Dive

- Cisco UCS C220 Deep Dive

- Cisco UCS C480 ML Deep Dive

- Cisco UCS C4200 and C125 M5 Deep Dive

- All About Cisco UCS Manager 4.0

Stay tuned for even more articles discussing all of the Cisco UCS architecture components.

An Overview of the Cisco UCS Architecture

I hope you have a better understanding of the Cisco UCS Architecture after reading this article. This article serves as a very basic overview of what the Cisco UCS architecture consists of, and how it differs from a traditional server architecture. If you want to learn how to really architect a Cisco UCS environment, there are many more considerations such as:

- Sizing network uplinks

- Sizing FEX to Fabric Interconnect connections

- Sizing servers

- Designing and Configuring service profiles

Cisco UCS architecture is fundamentally different from any other server architecture out there. This provides a number of advantages, such as streamlined management of the infrastructure stack and the power of UCS Manager’s service profiles for hardware abstraction. When a Cisco UCS environment is designed correctly, it can provide the utmost in availability and performance after a simple initial configuration.

Melissa is an Independent Technology Analyst & Content Creator, focused on IT infrastructure and information security. She is a VMware Certified Design Expert (VCDX-236) and has spent her career focused on the full IT infrastructure stack.